Robotics Expert Warns of Risks in Humanoid Development

Autonomous humanoid robots are approaching a tipping point, but leading robotics expert Dr. Carl Strathearn warns that without stringent regulation the technology may pose significant risks. Speaking ahead of his presentation at New Scientist Live, held October 18-20 at London’s ExCeL, Dr. Strathearn emphasizes the gap between what humanoid robots can do today and what they would need to do to be truly useful.

Despite impressive demonstrations of robots performing tasks such as pouring drinks or mimicking human expressions, Dr. Strathearn, a lecturer in Computer Science at Edinburgh Napier University, notes that the reality remains far from these polished portrayals. He states, “The biggest problem is the lack of real-world data and the technological means of gathering it in large enough quantities to train our robots effectively.” Presently, many systems depend on virtual simulations, reinforcement learning, or analyzing YouTube videos, leading to machines that excel in controlled environments but falter in unpredictable real-world situations.

Dr. Strathearn is set to showcase his “friendly robot” named Euclid at the upcoming event. He highlights the complexity of even simple household items, saying, “Think of a simple object like a cup. There are millions of variations in size, weight, shape, colour. Now extrapolate that to every object in a house, and you can see the scale of the challenge.” This underscores the technological hurdles that remain before humanoid robots can be deemed reliable helpers.

One proposed solution involves crowdsourcing real-world data on a large scale, potentially through devices like Meta’s Ray-Ban smart glasses. However, Dr. Strathearn acknowledges that this would raise ethical challenges and would require extensive participation from the public.

The primary risk, according to Dr. Strathearn, lies not in robots rebelling, as depicted in science fiction, but in human misuse. He explains, “If you are talking Terminator, the answer is no. We are and always have been more of a danger to ourselves than anything else.” He is currently spearheading a petition to the UK Parliament advocating for regulations governing humanoid robots in public spaces, particularly in light of numerous incidents where human operators have nearly caused accidents.

Dr. Strathearn emphasizes that the dangers stem from human control over these robots, often executed via handheld devices, which can lead to unpredictable outcomes. “There are more and more instances of serious near misses between humans and robots — not because of AI, but because of humans,” he notes.

Another concern is the perception of robots. Overly lifelike designs may evoke discomfort, a phenomenon known as the “uncanny valley.” Yet, in specific settings such as dementia care, a familiar human-like appearance could provide comfort. Dr. Strathearn elaborates, “People have different thresholds of perception when it comes to creepiness. That’s why we have different types of robots: some very lifelike, some with just minimal facial features.”

During his PhD, Dr. Strathearn developed the “Multimodal Turing Test” to assess whether communication through lifelike robots makes artificial intelligence seem more human. Subsequent research from Japanese scientists confirmed that people were more likely to perceive AI as human when it interacted through a realistic robot.

Acceptance of humanoid robots, he insists, will not happen by chance but through gradual implementation and educational initiatives, particularly for children studying robotics and AI. Despite the challenges, companies are eager to advance in this field. Dr. Strathearn states, “The hype is a major issue. We are far from humanoid robots that are good enough to do everyday tasks effectively, but that doesn’t stop major companies wanting to mass produce them.”

He points out a critical skills shortage in the robotics sector, exacerbated by educational institutions that often segregate subjects like computer science, engineering, and design. “Without a solid foundation in education, I worry about the sustainability of the humanoid robotics industry,” he warns.

Interestingly, Dr. Strathearn identifies space exploration as a promising area for the deployment of humanoid robots. He believes that these robots could operate in space for extended periods, assisting in tasks such as planetary terraforming or exploring challenging terrains beyond the capabilities of current robotic rovers. “They may be more useful much quicker for this type of exploration work than down here on Earth ironically,” he explains.

While the prospect of robots aiding in the colonization of other planets is intriguing, Dr. Strathearn’s message is clear: stringent regulations are essential to ensure their safe and reliable integration into society on Earth. He concludes, “Robots might terraform Mars one day. But on Earth, only strict regulation will keep us safe.”

-

Health3 months ago

Health3 months agoNeurologist Warns Excessive Use of Supplements Can Harm Brain

-

Health3 months ago

Health3 months agoFiona Phillips’ Husband Shares Heartfelt Update on Her Alzheimer’s Journey

-

Science2 months ago

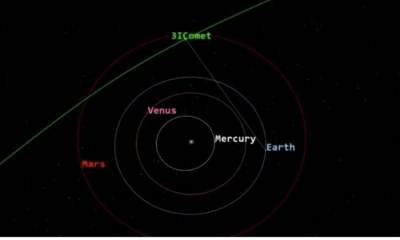

Science2 months agoBrian Cox Addresses Claims of Alien Probe in 3I/ATLAS Discovery

-

Science2 months ago

Science2 months agoNASA Investigates Unusual Comet 3I/ATLAS; New Findings Emerge

-

Science1 month ago

Science1 month agoScientists Examine 3I/ATLAS: Alien Artifact or Cosmic Oddity?

-

Entertainment5 months ago

Entertainment5 months agoKerry Katona Discusses Future Baby Plans and Brian McFadden’s Wedding

-

Science1 month ago

Science1 month agoNASA Investigates Speedy Object 3I/ATLAS, Sparking Speculation

-

Entertainment4 months ago

Entertainment4 months agoEmmerdale Faces Tension as Dylan and April’s Lives Hang in the Balance

-

World3 months ago

World3 months agoCole Palmer’s Cryptic Message to Kobbie Mainoo Following Loan Talks

-

Science1 month ago

Science1 month agoNASA Scientists Explore Origins of 3I/ATLAS, a Fast-Moving Visitor

-

Entertainment2 months ago

Entertainment2 months agoLewis Cope Addresses Accusations of Dance Training Advantage

-

Entertainment3 months ago

Entertainment3 months agoMajor Cast Changes at Coronation Street: Exits and Returns in 2025